Voice AI is not a technology decision—it is a governance and risk decision.

- Voice introduces identity, emotion, and real-time context into enterprise systems

- It expands the attack surface beyond traditional data controls

- It forces organizations to answer questions they are not yet prepared to answer

The Illusion of AI Adoption

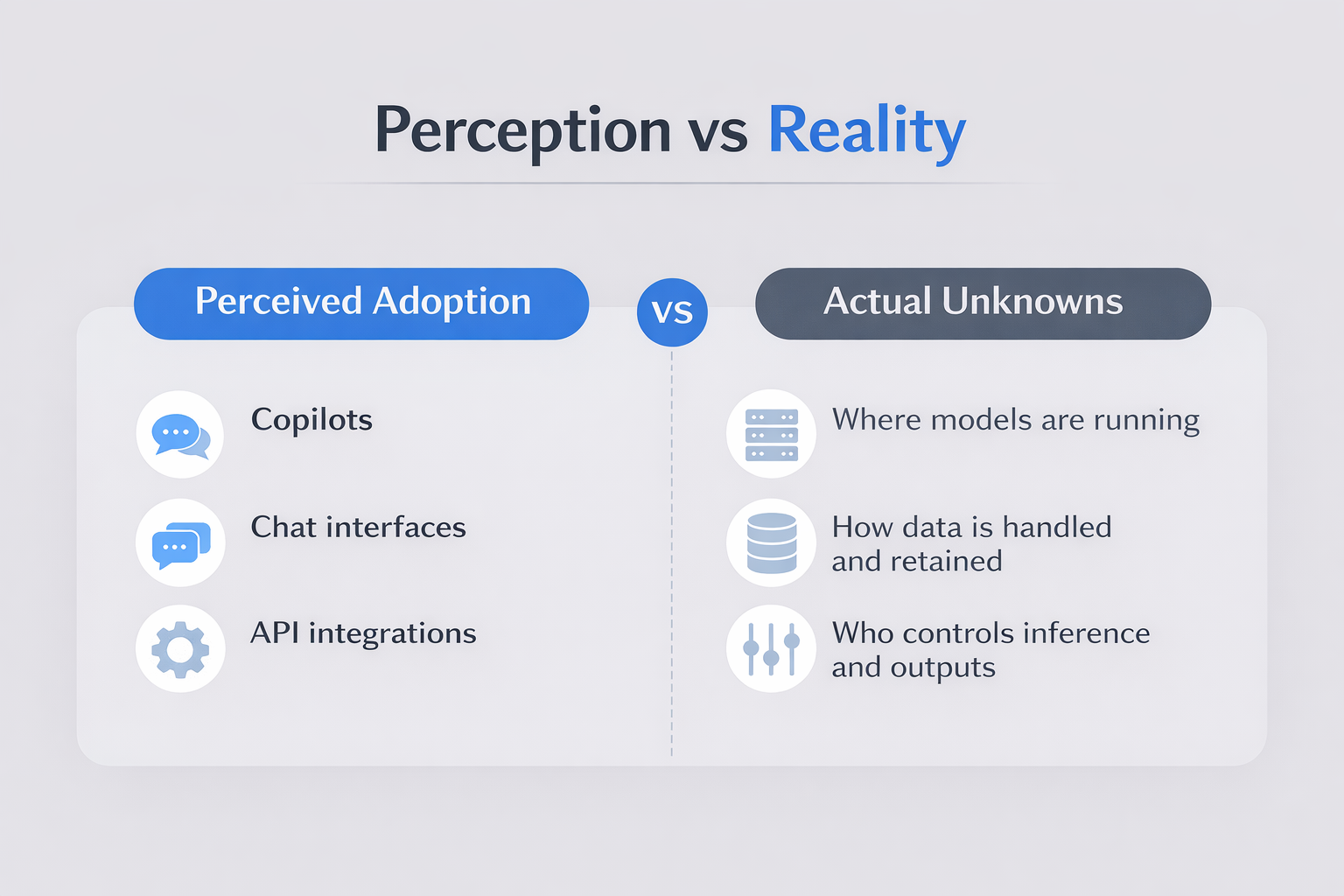

Insight: Most enterprises are adopting AI capabilities without understanding how those capabilities operate

Many organizations report that they are “using AI.” In practice, this typically reflects surface-level adoption:

Perceived Adoption

- Copilots

- Chat interfaces

- API integrations

Actual Unknowns

- Where models are running

- How data is handled and retained

- Who controls inference and outputs

This gap has been manageable in text-based use cases. With voice, it becomes a material risk.

Why Voice AI Changes the Risk Equation

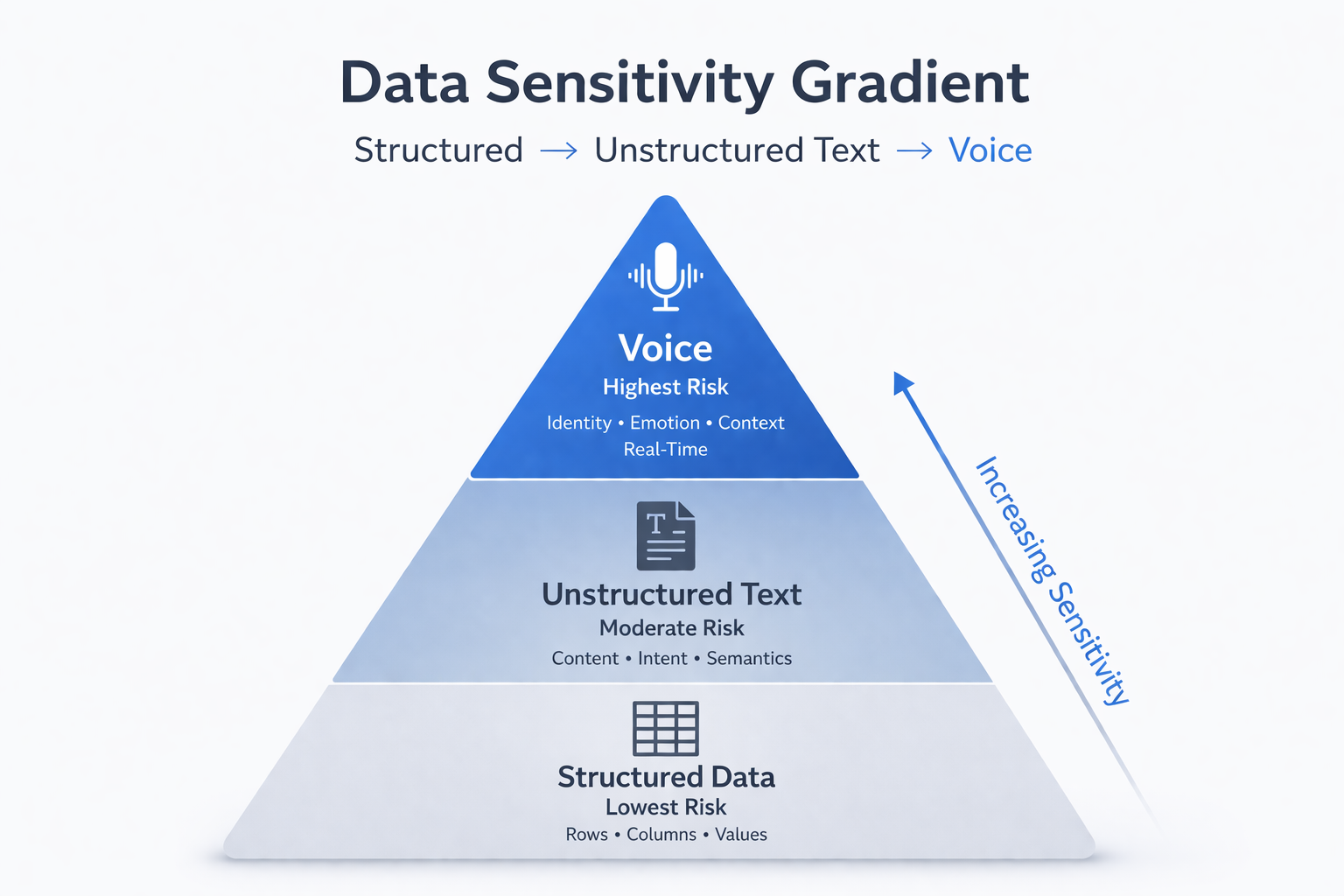

Insight: Voice collapses identity, emotion, and context into a single data stream

Voice is not simply another input modality. It is a composite signal that combines multiple dimensions of sensitive data:

- Identity (who is speaking)

- Emotion (tone, stress, urgency)

- Context (real-time, often unstructured interaction)

This fundamentally changes the enterprise risk profile.

A simplified view of data sensitivity:

- Structured Data → lowest risk

- Unstructured Text → moderate risk

- Voice → highest risk

Voice does not just increase risk—it concentrates it.
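One way to make "concentration" operational is a high-water-mark rule: a payload is classified at the level of its most sensitive component. The rule and the names below (`Sensitivity`, `payload_sensitivity`) are illustrative assumptions, not a prescribed scheme. The sketch simply encodes the three tiers above as an ordered enum.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical tiers mirroring the simplified view above: higher value = higher risk.
    STRUCTURED = 1
    UNSTRUCTURED_TEXT = 2
    VOICE = 3


def payload_sensitivity(components: list[Sensitivity]) -> Sensitivity:
    """High-water-mark rule (an assumption): a payload is as sensitive as its riskiest part."""
    if not components:
        raise ValueError("payload has no components")
    return max(components)


# A call record bundling structured metadata, a transcript, and the raw audio.
call_record = [Sensitivity.STRUCTURED, Sensitivity.UNSTRUCTURED_TEXT, Sensitivity.VOICE]
print(payload_sensitivity(call_record).name)  # VOICE
```

Under this rule, attaching audio to an otherwise low-risk record moves the whole record to the highest tier.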

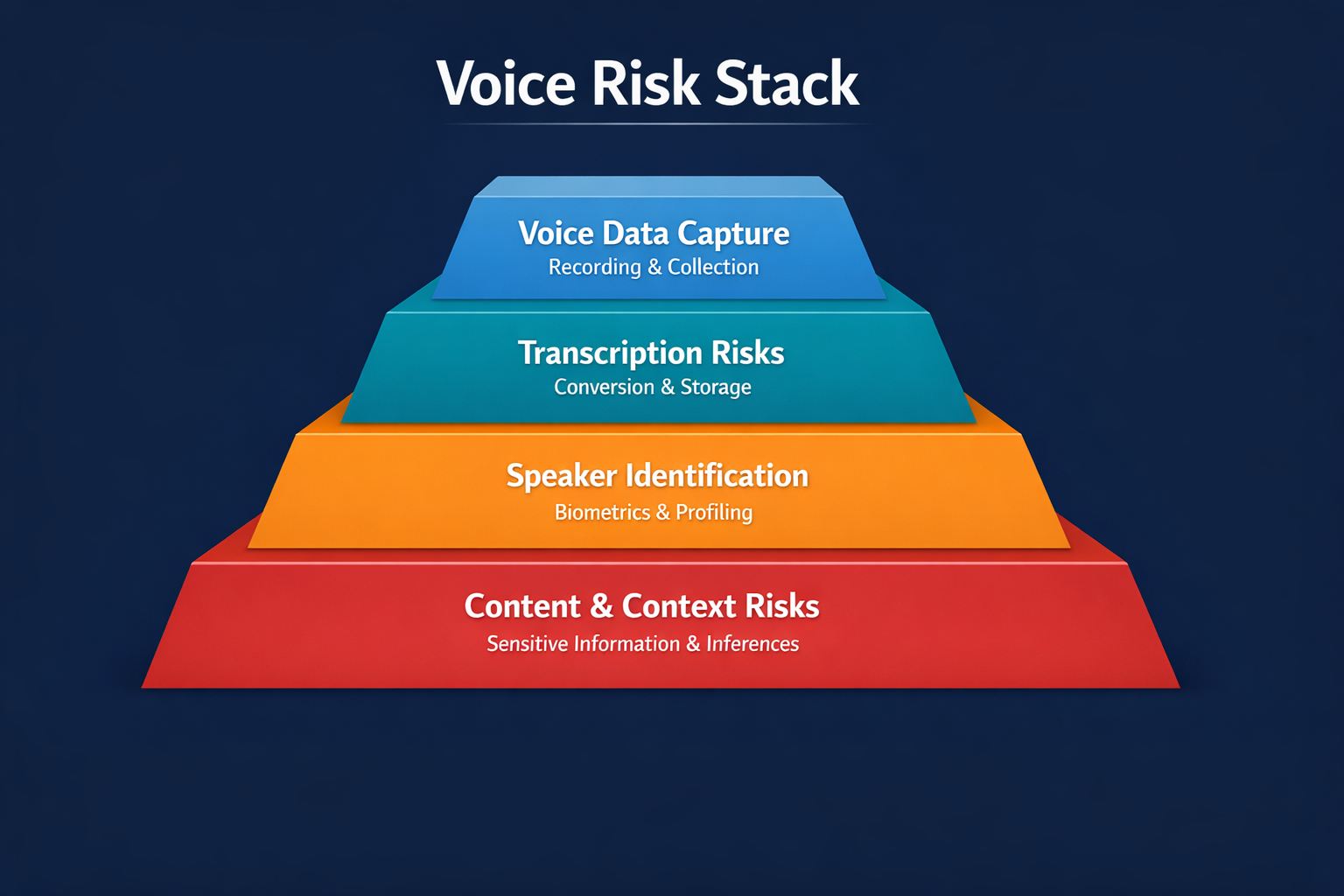

Voice Risk Stack

Insight: Voice aggregates multiple layers of sensitivity into a single stream

- Identity signals

- Emotional indicators

- Contextual meaning

- Real-time interaction

These layers are not separable: they arrive together, in the same signal, at the same time. This makes voice uniquely difficult to govern using traditional controls.

The Sovereignty Problem

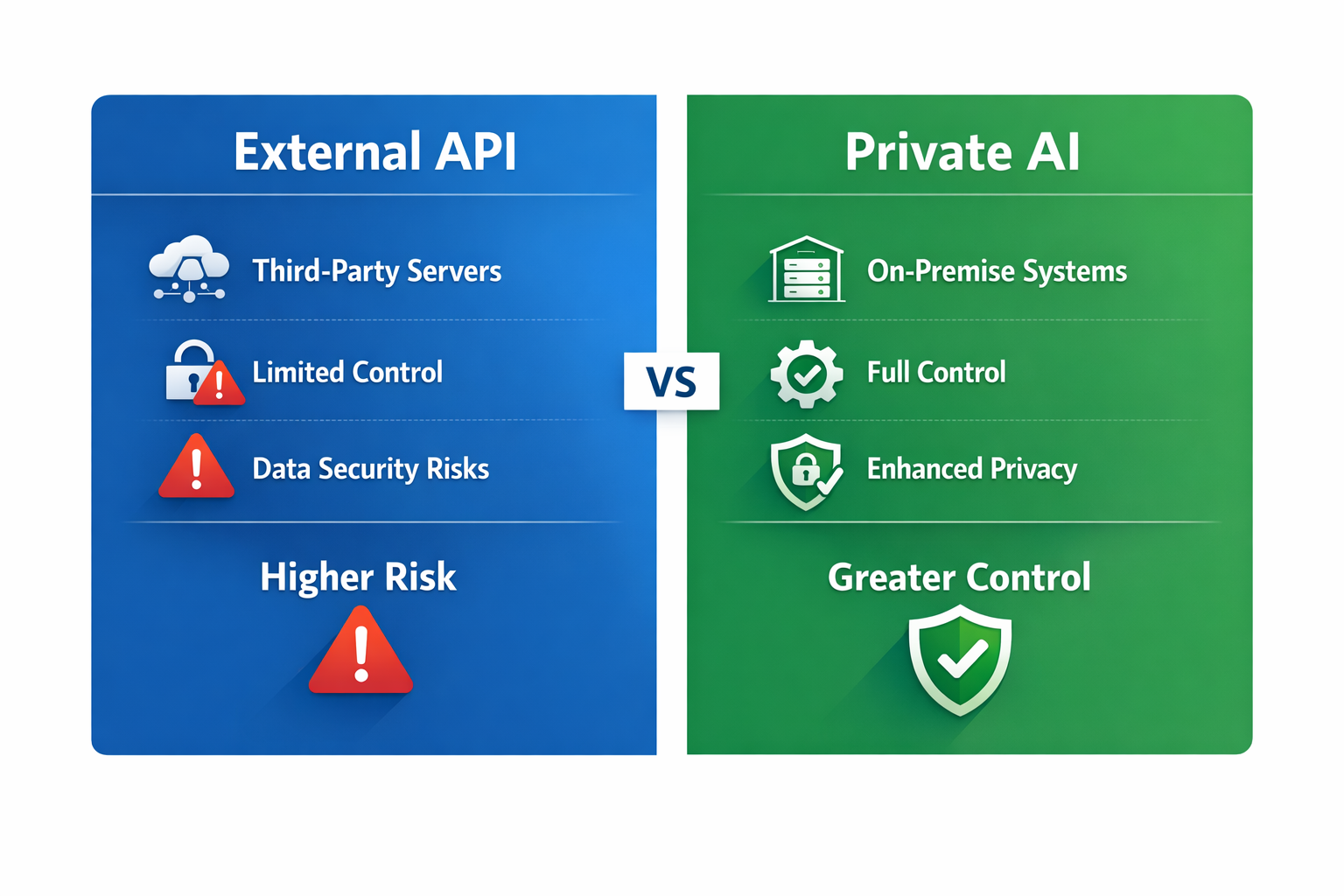

Insight: External AI consumption models introduce uncertainty that conflicts with enterprise control requirements

Most enterprise AI today is delivered through external APIs. While efficient, this model introduces structural risks:

- Unknown data handling and retention

- Shared, multi-tenant infrastructure

- Limited auditability of model behavior

For regulated industries, these are not merely technical concerns; they are compliance exposures.

Voice amplifies this challenge. Streaming real-time conversations through external systems raises questions many organizations cannot answer today.

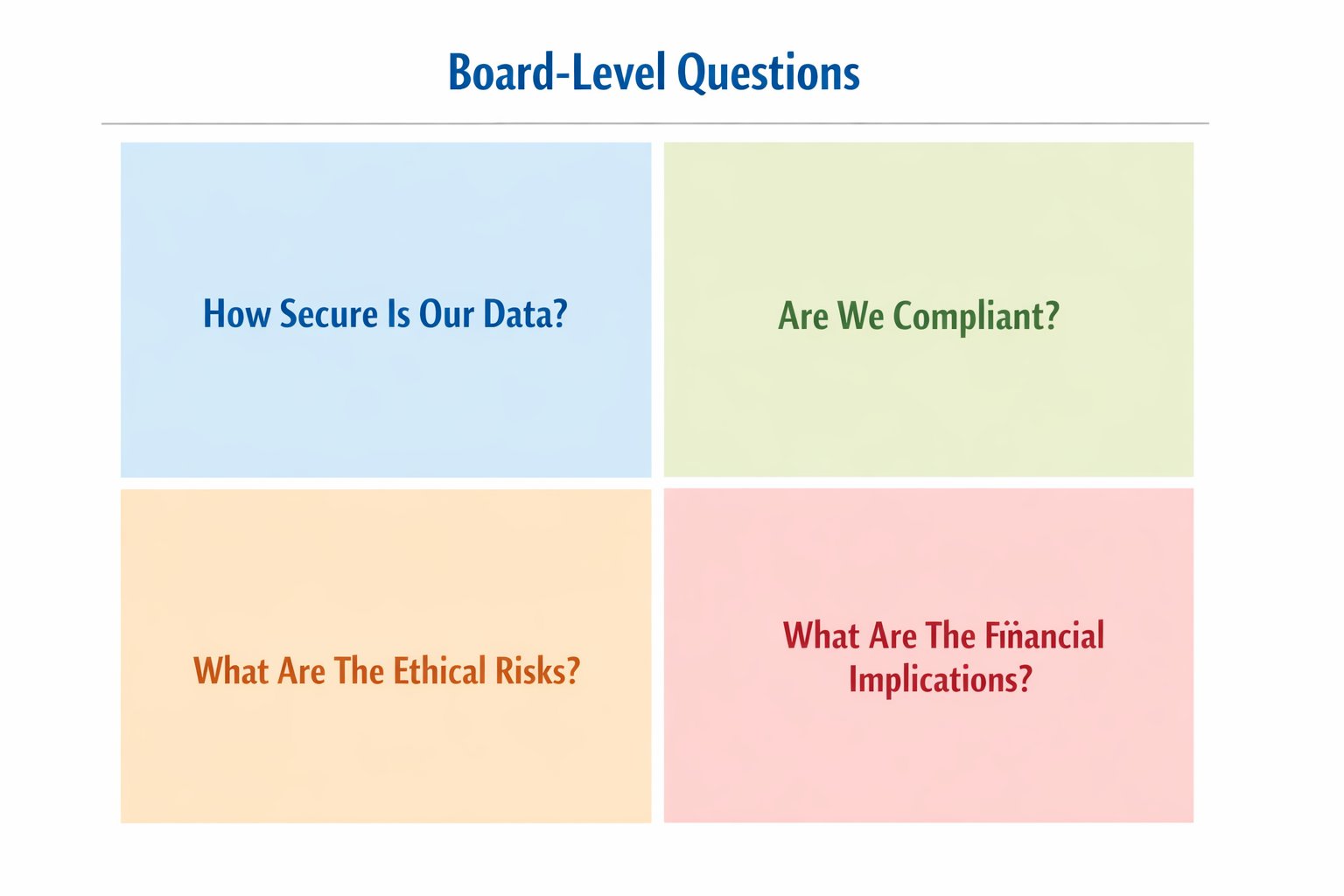

The Questions Boards Will Start Asking

Insight: Voice AI elevates technical questions into governance requirements

As deployment scales, executive and board-level scrutiny will increase:

- Where does voice data persist?

- Where does inference occur?

- Can model behavior be audited?

- Who owns the model and its outputs?

These questions mirror past inflection points in cloud, data, and cybersecurity, but voice consolidates them into a single capability.
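Each of these questions can be answered per interaction only if the system records the answer at the time of the interaction. The sketch below assumes a hypothetical per-interaction record (`VoiceInteractionRecord`) with one field per board question and reports which questions a given record leaves unanswered. The field names, and the use of an audit-log location as the answer to "Can model behavior be audited?", are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping from a record field to the board question it answers.
BOARD_QUESTIONS = {
    "storage_location": "Where does voice data persist?",
    "inference_location": "Where does inference occur?",
    "audit_log_uri": "Can model behavior be audited?",
    "model_owner": "Who owns the model and its outputs?",
}


@dataclass
class VoiceInteractionRecord:
    """Hypothetical per-interaction record; None means the answer is unknown."""
    interaction_id: str
    storage_location: Optional[str] = None
    inference_location: Optional[str] = None
    audit_log_uri: Optional[str] = None
    model_owner: Optional[str] = None


def unanswered_questions(record: VoiceInteractionRecord) -> list[str]:
    """Return the board questions this record cannot answer, in a fixed order."""
    return [
        question
        for field_name, question in BOARD_QUESTIONS.items()
        if getattr(record, field_name) is None
    ]


record = VoiceInteractionRecord(
    interaction_id="call-001",
    storage_location="s3://example-bucket/voice/",  # hypothetical location
    inference_location="in-cluster",
)
print(unanswered_questions(record))
# ['Can model behavior be audited?', 'Who owns the model and its outputs?']
```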

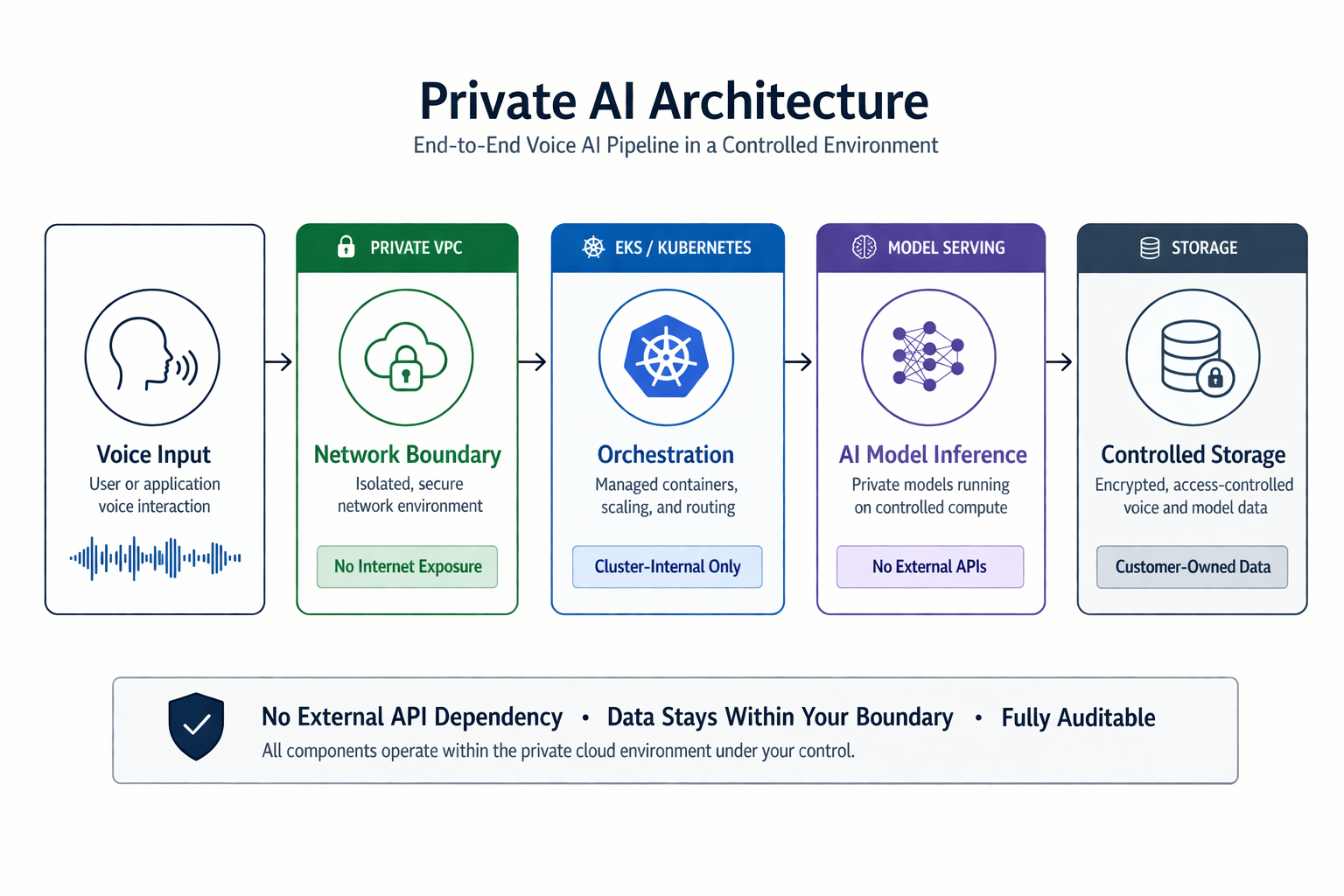

What Control Actually Looks Like

Insight: AI sovereignty is an architectural decision, not a policy statement

Control is not theoretical. It is implemented through system design.

A controlled voice AI architecture includes:

- Cluster-internal deployment (e.g., Kubernetes on AWS)

- Private inference pipelines

- Controlled storage of voice assets

- No dependency on external inference APIs

This architecture shows that organizations do not have to trade capability for control.

AI sovereignty is the ability to run, manage, and govern AI within defined enterprise boundaries.
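The "no dependency on external inference APIs" requirement can also be enforced in application code. The minimal sketch below assumes the cluster uses the default Kubernetes DNS domain (`cluster.local`), so in-cluster Services resolve as `<service>.<namespace>.svc.cluster.local`. It rejects any inference endpoint outside that domain. The function names and example hostnames are hypothetical, and a hostname check like this complements network-level enforcement (for example, Kubernetes NetworkPolicy egress rules) rather than replacing it.

```python
from urllib.parse import urlparse

# Assumption: default Kubernetes cluster DNS domain "cluster.local".
CLUSTER_SERVICE_SUFFIX = ".svc.cluster.local"


def is_cluster_internal(endpoint_url: str) -> bool:
    """True if the endpoint's hostname is an in-cluster Service DNS name."""
    hostname = urlparse(endpoint_url).hostname or ""  # lowercased, port stripped
    return hostname.endswith(CLUSTER_SERVICE_SUFFIX)


def require_internal_endpoint(endpoint_url: str) -> str:
    """Refuse to wire a voice pipeline to an inference endpoint outside the cluster."""
    if not is_cluster_internal(endpoint_url):
        raise ValueError(f"external inference endpoint not allowed: {endpoint_url}")
    return endpoint_url


# Hypothetical in-cluster speech-to-text Service: accepted.
require_internal_endpoint("http://asr.voice-ai.svc.cluster.local:8080/v1/transcribe")
# A hypothetical external API would raise ValueError:
# require_internal_endpoint("https://api.example.com/v1/audio")
```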

Cloud Does Not Reduce Control

Insight: Loss of control is a function of architecture, not cloud adoption

A common misconception is that cloud-based AI reduces enterprise control. In practice, a disciplined cloud architecture can increase it.

Modern cloud environments enable:

- Isolated private network boundaries

- Fine-grained identity and access controls

- Encryption across storage and transport

- Defined lifecycle management for models and data

The distinction is critical:

- Consuming AI as a service reduces control

- Deploying AI as a system preserves it
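Treating AI as a deployed system means the four cloud controls listed in this section can be checked like any other configuration. The sketch below uses a hypothetical `VoiceDeploymentConfig` summary (its fields are assumptions, not a real cloud API) and reports which of the four controls a deployment does not yet satisfy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VoiceDeploymentConfig:
    """Hypothetical summary of a voice AI deployment's control settings."""
    private_network_only: bool           # no public ingress to inference or storage
    scoped_iam_roles: bool               # per-workload roles, no wildcard permissions
    encrypted_at_rest: bool              # voice assets encrypted in storage
    tls_in_transit: bool                 # audio and transcripts encrypted on the wire
    audio_retention_days: Optional[int]  # None means no defined retention period
    model_version_pinned: bool           # deployed model version is explicit


def control_gaps(cfg: VoiceDeploymentConfig) -> list[str]:
    """Map each unmet setting back to the control it undermines."""
    gaps = []
    if not cfg.private_network_only:
        gaps.append("network boundary is not private")
    if not cfg.scoped_iam_roles:
        gaps.append("identity and access controls are not fine-grained")
    if not (cfg.encrypted_at_rest and cfg.tls_in_transit):
        gaps.append("encryption does not cover both storage and transport")
    if cfg.audio_retention_days is None or not cfg.model_version_pinned:
        gaps.append("lifecycle for models and data is undefined")
    return gaps


cfg = VoiceDeploymentConfig(True, True, True, False, None, True)
print(control_gaps(cfg))
# ['encryption does not cover both storage and transport', 'lifecycle for models and data is undefined']
```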

Why This Matters Now

Insight: Voice removes interaction friction, increasing both speed and exposure

- Real-time interaction accelerates decision-making

- Reduced friction increases usage frequency

- Increased usage amplifies risk exposure

Organizations are not just adopting a new interface—they are introducing a new class of enterprise risk.

The Strategic Shift for Leadership

Insight: The AI question is shifting from capability to control

The leadership mandate is changing:

From:

Can we use AI?

To:

Can we control AI in a way that meets regulatory, security, and operational standards?

Organizations that can answer this confidently will move forward. Others will slow, not for lack of capability, but for lack of control.

Closing Perspective

Insight: Competitive advantage will be defined by controlled adoption, not speed alone

In regulated environments, success has never been defined by speed alone. It has been defined by the ability to adopt innovation without increasing unmanaged risk.

Voice AI introduces both opportunity and scrutiny.

This is not a future risk. It is a current governance gap.

The organizations that lead will not be those that adopt AI the fastest, but those that can prove, with confidence, that they control it.